I was on a call recently when someone asked how much of our code was written by AI. One of the devs replied, “100%.” It was a confident answer and a correct one, but it misses the point, because it measures the wrong thing.

The person asking wasn’t an engineer. He’s smart and pays attention, and vibe-codes out prototypes himself. But in his mind, the new model is that you tell the AI what you want, and it builds it. He assumed that the work would move downstream to testing, QA, and reviewing the output.

The work didn’t just move downstream, it moved upstream as well.

To get the result you want from the AI, you must know what you want with a specificity that wasn’t needed before. Thinking through and writing the specification has become a bigger job. You might spend 20 minutes working with the AI to specify exactly what you want, flushing out open questions and leaving no ambiguity. Then you hit enter.

The AI writes the code. This is the part non-technical people see; they don’t see the preparation that went into priming the LLM.

The downstream work has grown too, and that is where the damage from poor planning shows up. QA testing still needs to be done, and code review becomes more time-consuming. When a human writes code, the act of writing it forces a thousand small decisions, and the human leaves a trail of those decisions in the code. When an AI writes code, those decisions happened somewhere in a probability distribution, and you have no way to reconstruct them. It is similar to reviewing a PR in a repo you have no history with: it takes time to familiarize yourself with the codebase.

A few months ago I was asked to review a vibe-coded side-project React app. The app solved a real problem, and I was asked what it would take to get it production ready. After about 15 minutes of reviewing, I found a single React TypeScript file with 20k+ lines.

Twenty thousand lines. In one file.

I had to reject it at that point, because however good a developer you are, a project organized that way is unreviewable. It was the result of skipping the planning up front and trying to build everything and solve every problem at once.

The bummer here is that the side project was good. It solved a real problem and was helpful, but in its current state, it was mostly tech debt.

This is what nobody on the “AI makes us faster” side of the conversation is admitting. AI made it possible for a non-engineer to produce twenty thousand lines of code in an afternoon. It did not make it possible for anyone to review that same code in an afternoon. The cost didn’t disappear. It got handed to someone else.

The developer on my call wasn’t lying when he said that AI writes 100% of the code. But that number is the wrong thing to focus on. If you watched how he works, you’d see he gets useful output because he spends real time up front telling the AI what he wants, in specific terms, with examples, constraints, and edge cases. Then he spends real time afterward reading what came back and deciding which parts to keep. The 100% describes the typing of the code, not all the work around it.

To be clear, the middle did get faster. Something that used to take me a day can take an hour now, and that’s real. I’m not arguing AI didn’t change anything. I’m arguing that the hour I saved in the middle isn’t a free hour, because the planning got longer on one side and the review got heavier on the other. When you add it all up honestly, you’re maybe 20% faster, not 10x. The gains are real. They’re just smaller than the marketing claims.

So when someone asks me what percentage of my code is written by AI, the honest answer is that the question is measuring the wrong thing. Ask me instead how much longer my prompts have gotten, and how much more code I read in a week than I did two years ago. Those are the numbers that moved.

AI is useful. I use it every day on personal projects. But useful and 10x are not the same word.

I Give Advice 75% of the Time. I'm Trying to Get to 50.

I Give Advice 75% of the Time. I'm Trying to Get to 50.

20 Years of Dreamweaver, and One Long Overdue Migration

20 Years of Dreamweaver, and One Long Overdue Migration

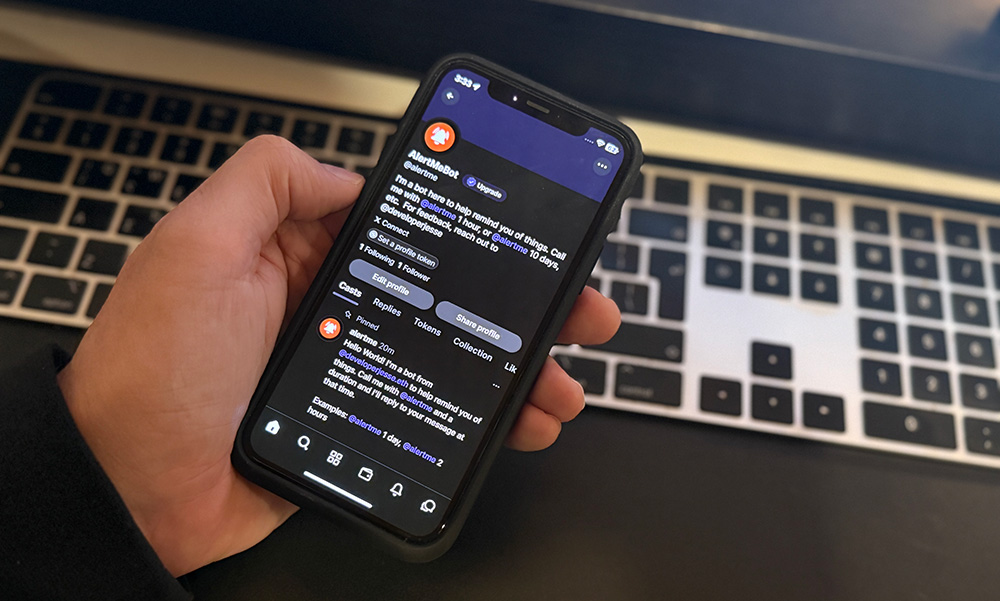

AlertMe - Farcaster Reminder Bot

AlertMe - Farcaster Reminder Bot